Market Overview

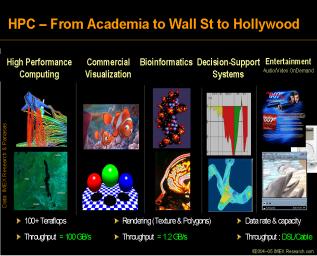

With the availability of low-cost, high-volume servers running open source software and using newer technologies such as clustering and high-performance interconnects, high performance computing is now ready to take off in many environments: from technical computing in academia and research labs to Wall Street for financial decisions, and from the health sciences for drug discovery all the way to Hollywood for making surrealistic movies.

According to IMEX Research, the applications market can be divided into four major market segments:

1. Online Transaction Processing (OLTP)

2. Decision Support System (DSS)

3. High Performance or Numeric Intensive Computing (HPC)

4. Data Streaming/Digital Living


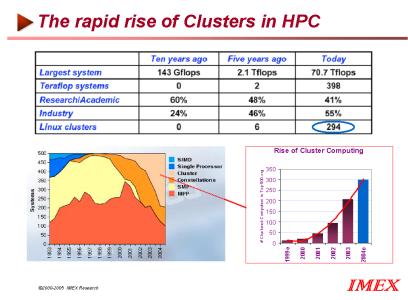

Rise of Clusters in HPC

Clustering technology orchestrates the low-latency, high-bandwidth interconnects that are necessary to make high performance computing feasible.

There has been a phenomenal rise in the use of clustering technology to build high performance computers over the last ten years. Previously, the largest supercomputer, built without clustering technology, boasted 143 Gflops, cost an astounding $40 million, and was targeted at the Government National Labs. Today, clustering has enabled supercomputers to reach 70.7 Tflops, with 294 clusters, at a price tag of less than $4000 (a price reduction to 1/10,000 of the earlier cost), targeting a broad spectrum of markets such as medicine, bioinformatics, aerospace, life sciences, weather forecasting, defense, movies and financial markets.
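Taking the figures in the paragraph above at face value, the two headline ratios can be checked with a few lines of Python. This is only arithmetic on the quoted numbers (not an independent verification of them), and the variable names are illustrative.

```python
# Figures as quoted in the text, taken at face value (not independently verified).
OLD_GFLOPS = 143.0            # earlier non-clustered supercomputer, in Gflops
OLD_PRICE_USD = 40_000_000    # "$40 million"
NEW_GFLOPS = 70.7 * 1000      # 70.7 Tflops expressed in Gflops
NEW_PRICE_USD = 4_000         # "less than $4000", as quoted

# How many times faster the newer system is.
perf_gain = NEW_GFLOPS / OLD_GFLOPS

# How many times cheaper the quoted price is (new price = 1/price_factor of old).
price_factor = OLD_PRICE_USD / NEW_PRICE_USD

print(f"performance gain: {perf_gain:.0f}x")
print(f"price reduction:  {price_factor:.0f}x (new price = 1/{price_factor:.0f} of old)")
```

The performance ratio comes out at roughly 494 times, while the quoted prices give exactly the 1/10,000 reduction stated in the text.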


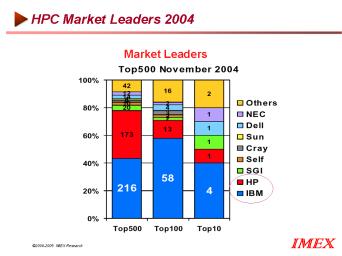

Market Leaders in HPC

IBM is the market leader in high performance computing with a 44% market share; Hewlett-Packard is second with 34%, followed by NEC and Sun. Dell, by virtue of its low-cost direct sales model, is aggressively targeting the high performance computing market with rack-mount and blade server technology. Scientists and researchers have long been at the forefront of building low-cost high performance computers and have successfully led the way in the adoption of clustering technology.

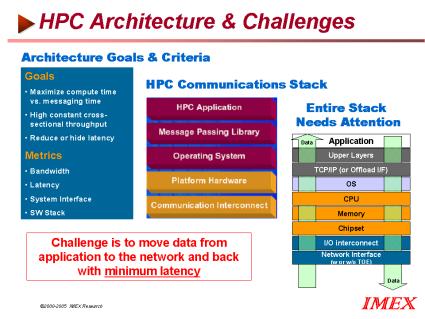

HPC Architecture and Challenges

The main goal of HPC architecture is to maximize compute time and to reduce or hide latency (the messaging time between cluster nodes) while keeping cross-section throughput as high as possible. The challenges in HPC architecture and implementation are multi-level, because the entire HPC communication stack needs optimization, as shown below.
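The goal of maximizing compute time while hiding latency can be illustrated with a simple timing model. This sketch assumes each iteration on a node has one compute phase and one messaging phase, and treats "hiding" latency as perfectly overlapping the two; real codes usually achieve only partial overlap, and the timings used are hypothetical.

```python
def step_time(compute_s: float, comm_s: float, overlap: bool) -> float:
    """Wall-clock time of one iteration on a node (simplified model).

    Without overlap the node computes, then waits for messages, so the
    times add. With perfect overlap (communication hidden behind
    computation) the slower of the two phases sets the pace.
    """
    return max(compute_s, comm_s) if overlap else compute_s + comm_s


def compute_fraction(compute_s: float, comm_s: float, overlap: bool) -> float:
    """Share of wall-clock time spent on useful computation."""
    return compute_s / step_time(compute_s, comm_s, overlap)


if __name__ == "__main__":
    # Hypothetical per-iteration costs: 8 ms of computation, 2 ms of messaging.
    compute, comm = 8e-3, 2e-3
    for ov in (False, True):
        print(f"overlap={ov}: step={step_time(compute, comm, ov) * 1e3:.1f} ms, "
              f"compute fraction={compute_fraction(compute, comm, ov):.0%}")
```

In this toy case, overlapping messaging with computation raises the compute fraction from 80% to 100%; once messaging takes longer than computation, however, it can no longer be fully hidden and latency reduction becomes the only lever.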

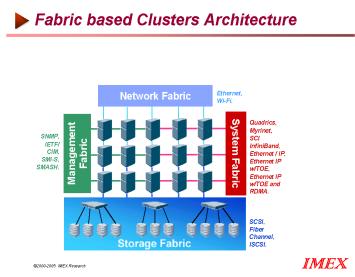

Fabric based Cluster Architecture

The fabric-based architecture that interconnects multiple nodes relies on a myriad of pertinent fabric technologies. Choosing among System Fabrics (Quadrics, Myrinet, SCI, InfiniBand), Network Fabrics (Ethernet, Wi-Fi), Storage Fabrics (SCSI, Fibre Channel, iSCSI) and Management Fabrics (SNMP, IETFI, CIM, SMI-S, SMASH) poses a continuous struggle to provide low-latency, high-bandwidth data transfer.
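The trade-off between latency and bandwidth in choosing a fabric can be made concrete with the widely used Hockney (latency-bandwidth) model, in which sending n bytes costs T(n) = α + n/β. We assume this model purely for illustration: it ignores contention, topology and protocol overhead. The two fabric profiles below are hypothetical and do not describe Quadrics, Myrinet, InfiniBand, Ethernet or any other real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FabricProfile:
    """Hypothetical interconnect described by the two Hockney-model parameters."""
    name: str
    latency_s: float      # alpha: fixed per-message start-up cost, in seconds
    bytes_per_s: float    # beta: sustained (asymptotic) transfer rate

    def transfer_time(self, nbytes: float) -> float:
        """T(n) = alpha + n / beta."""
        return self.latency_s + nbytes / self.bytes_per_s

    def effective_bandwidth(self, nbytes: float) -> float:
        """Bytes actually delivered per second for a message of nbytes."""
        return nbytes / self.transfer_time(nbytes)

    def half_bandwidth_size(self) -> float:
        """n_1/2 = alpha * beta: message size that reaches half the peak rate."""
        return self.latency_s * self.bytes_per_s


if __name__ == "__main__":
    # Illustrative numbers only; these are NOT measurements of any real fabric.
    fabrics = [
        FabricProfile("low-latency fabric (hypothetical)", 5e-6, 1e9),
        FabricProfile("commodity network (hypothetical)", 50e-6, 1e8),
    ]
    for f in fabrics:
        print(f"{f.name}: n_1/2 = {f.half_bandwidth_size():,.0f} bytes")
        for n in (64, 4096, 1_048_576):
            print(f"  {n:>9} B: {f.transfer_time(n) * 1e6:9.1f} us, "
                  f"{f.effective_bandwidth(n) / 1e6:7.1f} MB/s")
```

Under this model, small messages are dominated by α, so a low-latency system fabric matters most for fine-grained communication between nodes; for messages well beyond n_1/2 bytes, the bandwidth term dominates.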

The World of HPC Applications

High performance computing embraces a broad spectrum of markets and applications:

- Research and Development: research in drug discovery, diagnostics, information-based medicine, and bioinformatics, the mainstays of Health Science.

- Enterprise Optimization: energy (oil and gas exploration/production), automotive, aerospace, health sciences, manufacturing (CAD, CAM, CAE), and electronics.

- Business Analytics: Wall Street financial risk management (high-volume data flows and transactions), insurance, and interactive compliance.

- Government: scientific research, classified/defense work, and weather/environmental sciences.

- Entertainment: movie making and digital content creation/distribution.

For additional information, go to http://www.imexresearch.com.

IMEX Research, 1474 Camino Robles San Jose, CA 95120 (408) 268-0800 http://www.imexresearch.com
